Raise the AI Levee

Scrutiny is a scarce resource in the age of AI. Here's how to treat it like one.

In my last piece (The AI Watershed), I made a provocative claim: AI didn’t free up your team’s bandwidth - it filled it with work that nobody budgeted for.

The cost to produce analysis hit zero. The cost to make good decisions kept climbing. The filter that used to sit between the two is simply gone.

There’s a name for that: Chesterton’s Fence.

Chesterton’s Fence: Do not remove a fence (or change a policy/tradition) until you understand why it was put up in the first place.

The philosopher G.K. Chesterton argued that the most dangerous reforms are the ones that dismantle something without first asking what it was doing. If you can’t explain a fence’s purpose, you don’t get to take it down. It exists for a reason, even when that reason has become invisible over time.

AI never asked what the friction was for; it just quietly removed the effort that had been filtering your org’s analytical work for decades. This unintentional filter was a byproduct of how hard the work used to be. Commissioning a bad analysis used to cost something. Now it costs nothing. And organizations are paying for that in exactly the currency you’d expect: the attention and judgment of the people responsible for making decisions with it.

The obvious fix - a new intake form, a review process, another gate - won’t hold. Motivated people with low-cost tools navigate around gates like Magellan around the globe. They always have. What you need isn’t another fence. It’s a levee - something designed not to stop the flow, but to manage it.

Three things to build the levee: triage, culture, and calibration at scale.

1. Triage before you treat

During World War II, the U.S. Army faced a version of the problem every finance leader is dealing with right now: too many demands, not enough resources, and decisions that couldn’t wait. Its solution wasn’t to add more surgeons but to subtract, using a simple sorting system that ensured scarce medical attention went where it could do the most good. That system was triage, from the French word for sorting commodities like wheat or coffee beans into categories based on quality.

The genius was the simplicity. A handful of rules, applied consistently at the front of the line, before any treatment began. Patients who could wait, waited. Patients who couldn’t, didn’t. Sorting using these simple rules ensured that scarce resources were brought to bear where they mattered most - on the cases that had a shot, but only if they got immediate attention.

That’s the model for managing scrutiny in a finance org where AI has made analysis effectively free to produce.

In Upstream or Underwater, we discussed how the best operators move faster by filtering. The village that kept pulling bodies from the river was heroic and exhausted until someone decided to go upstream. Most finance teams right now are in the river, reviewing and correcting AI-generated work after it’s already in circulation. The filter has to move upstream, to the questions the team is trying to answer.

Before any analysis gets commissioned, three questions need answers.

What decision does this serve - not what would be interesting to know, but what specific decision, made by whom, by when?

What’s the materiality threshold - could the answer actually change what we do, or are we confirming what we already know?

Who owns the output - not who’s running the prompt, but who is responsible for the quality of the thinking behind it?

If those questions don’t have answers, the analysis doesn’t start.

Will this require a form at first? Probably. Not because forms are the goal - they’re not - but because the muscle doesn’t exist yet. A simple checklist makes the standard visible and repeatable while the team builds the instinct to ask these questions automatically. The goal is the moment the form becomes unnecessary. That’s when you know you’ve rebuilt what friction used to do for free.
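For teams that want to prototype that checklist, here is a minimal sketch of the three questions as an intake gate. Everything in it is an illustrative assumption rather than a prescribed system: the `AnalysisRequest` record, its field names, and the `unanswered_questions` and `may_start` helpers are hypothetical names made up for this example.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class AnalysisRequest:
    """Hypothetical intake record; field names are illustrative only."""
    title: str
    decision: Optional[str] = None          # the specific decision this serves
    decision_maker: Optional[str] = None    # who makes that decision
    decide_by: Optional[date] = None        # when the decision is due
    # Materiality: None means nobody has answered yet; False means the
    # analysis would only confirm what we already know.
    could_change_action: Optional[bool] = None
    output_owner: Optional[str] = None      # who answers for the quality of the thinking


def unanswered_questions(request: AnalysisRequest) -> List[str]:
    """Return the triage questions that still lack a usable answer."""
    gaps = []
    if not (request.decision and request.decision_maker and request.decide_by):
        gaps.append("What decision does this serve, made by whom, by when?")
    if not request.could_change_action:
        gaps.append("Could the answer actually change what we do?")
    if not request.output_owner:
        gaps.append("Who owns the output?")
    return gaps


def may_start(request: AnalysisRequest) -> bool:
    """The analysis starts only when every triage question has an answer."""
    return not unanswered_questions(request)
```

A request with only a title comes back with all three questions open, so it does not start. The point of returning the open questions, rather than a bare yes or no, is that the requester sees exactly what is missing.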

The Army didn’t manage mass casualties with complex algorithms. It managed them with a handful of simple rules, applied consistently before any treatment began. Finance leaders need the same thing - not a perfect system, but a repeatable one.

2. You are the checks and balances

In 1999, NASA lost its $125 million Mars Climate Orbiter because one team was using imperial units and another was using metric, and nobody detected the discrepancy across a 500-million-kilometer voyage. The probe entered the Martian atmosphere far lower than planned and disintegrated.

The post-mortem was pointed: the problem wasn’t the failure itself, but the failure to detect it in time.

The challenge in complex work is that it’s typically multi-threaded. Everyone works together, but not everyone sees how it all comes together. The crash was the result of not seeing across the seam between two teams working in parallel and of not asking the question that would have caught the mismatch before it became a crater.

We used this story in Gravity Wells to show how even great teams drift off course through small deviations that compound quietly. It applies here, too.

AI-generated analysis is the unit mismatch problem at scale. The output looks right. The charts are clean. The conclusions are confident. There’s no visible seam between “this was rigorously built” and “this was prompted into existence in four minutes by someone who didn’t fully understand the question.”

Unless someone with genuine context actually scrutinizes the work - not just reads it - the business drifts off course while everyone nods along.

Ownership in an AI-enabled finance org means one thing: you are the checks and balances. Not the AI. Not the process. You.

The commissioner of any analysis is responsible for the quality of the thinking behind it, regardless of which tool or team produced it. Your job is to get comfortable with that thinking. That means understanding the methodology well enough to defend it, knowing the assumptions well enough to stress-test them, and being willing to say out loud: “I’m not confident enough in this to act on it yet.”

That last part is harder than it sounds. AI produces outputs that feel certain - declarative language, authoritative formatting, confident conclusions. Admitting uncertainty in the face of a polished deck takes a kind of intellectual honesty that has to be modeled from the top before it shows up anywhere else. When the CFO says, “This analysis isn’t ready,” the team learns what ready actually means.

The goal is to build a culture where iron sharpens iron. Where the instinct to scrutinize is as natural as the instinct to produce. Where AI is what it’s supposed to be: a multiplier of good thinking, not a substitute for it.

The Orbiter crash resulted from no one asking: Are we sure we’re using the same units? Sometimes the most important thing a CFO can do is name what nobody else is willing to say, before the business acts as if it can see when it can’t.

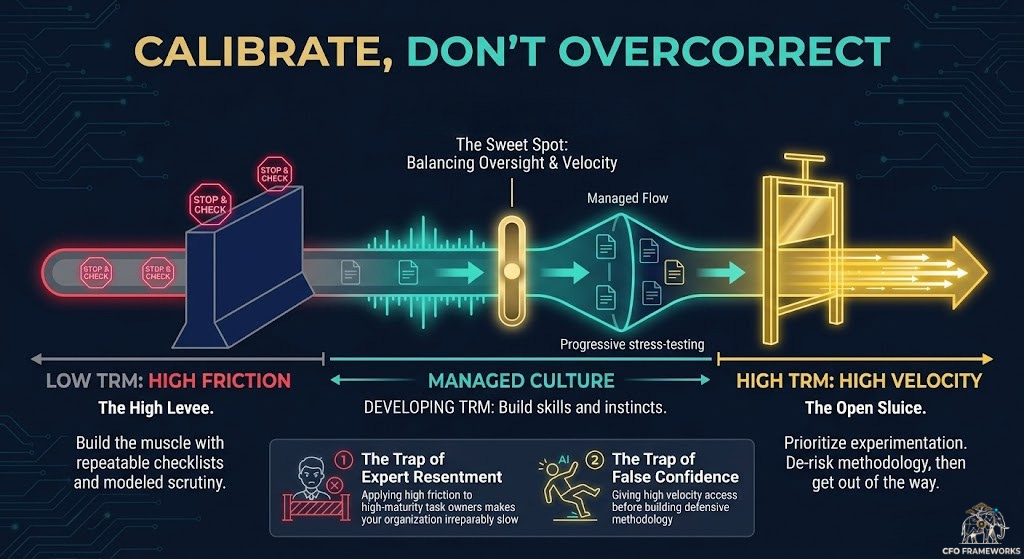

3. Calibrate, don’t overcorrect

Build the levee too high, and you stop the flow entirely. Teams stop experimenting. People ask permission before prompting. The org gets slower and more cautious at exactly the moment it needs to be faster and more capable. You’ve traded one problem for another, and the AI advantage you were trying to protect evaporates entirely.

In The Dangers of Playing it Safe, I made the case that conventional thinking is a guaranteed way to underperform. A finance org that over-indexes on caution around AI will make fewer mistakes in the short term and fall irreparably behind in the long term. Playing it safe isn’t playing to win.

The better frame is Andy Grove’s concept of Task-Relevant Maturity - how much oversight you apply should depend on where someone actually is in their capability, not on their seniority or tenure. When TRM is low, you lean in. As it builds, you pull back. When it’s high, you get out of the way.

Apply that inside the finance team, and the path is clear. Someone who’s been using AI tools thoughtfully, stress-testing outputs and building their own instincts about where the tools fail, doesn’t need the same oversight as someone who just discovered that ChatGPT can build a financial model. Applying the same gate to both builds resentment in the former and false confidence in the latter.
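One way to make that calibration explicit is to write the mapping down. The sketch below is an assumption-laden illustration, not Grove's own formulation: the three `Maturity` levels, the review descriptions in `OVERSIGHT`, and the `oversight_for` helper are hypothetical. The only thing it tries to capture is that oversight follows demonstrated capability with the tools and ignores seniority.

```python
from enum import Enum


class Maturity(Enum):
    """Hypothetical three-level scale for task-relevant maturity with AI tools."""
    LOW = 1
    BUILDING = 2
    HIGH = 3


# Illustrative oversight postures; the wording after each colon is an assumption.
OVERSIGHT = {
    Maturity.LOW: "lean in: review the question, the method, and the output",
    Maturity.BUILDING: "pull back: spot-check assumptions and conclusions",
    Maturity.HIGH: "get out of the way: review by exception",
}


def oversight_for(maturity: Maturity, seniority_years: int = 0) -> str:
    """Pick an oversight posture from maturity alone.

    seniority_years is accepted but deliberately ignored, to make the point
    that tenure should not change the answer.
    """
    return OVERSIGHT[maturity]
```

Under this sketch, a twenty-year veteran who just discovered the tools gets the same `Maturity.LOW` posture as a new hire in the same position, and a junior analyst who has earned `Maturity.HIGH` gets left alone.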

Outside the finance team, the challenge is harder. You can’t audit every function’s AI output. Instead, you have to find leverage in others: identify the champions in each function who are already using AI thoughtfully, invest in them, and let them set the standard for their teams. If they don’t exist, fund AI hackathons to build excitement and velocity. Encourage experimentation openly so that uncertainty surfaces rather than gets hidden. The worst outcome is a team that makes a mistake and buries it because they’re afraid to admit they weren’t sure what they were doing.

As we talked about in A Light Touch, the goal of good leadership is to make yourself increasingly unnecessary. Build enough capability and cultural expectation that the instinct to scrutinize becomes self-sustaining - where the triage questions get asked automatically, where nobody circulates an analysis they can’t defend, where iron sharpens iron without you having to hold the blade.

The levee stays up.

Orgs evolve. People turn over. New functions adopt AI at different rates and with different levels of sophistication. The infrastructure you build now will need to be maintained, updated, and recalibrated as the landscape shifts. The work gets easier as the culture takes hold - but the guard stays up.

A levee that isn’t maintained is a flood waiting to happen.

The levee is the infrastructure that makes everything else possible. Build it well, maintain it honestly, and the water rising around you becomes what carries you forward.

Give yourself some grace

None of this happens overnight.

What’s described above is a lot. It’s a shift in culture, process, and leadership posture that runs counter to the “move fast” pressure every finance leader is under right now. As with every technological shift, AI emerged in a fraction of the time it will take to incorporate it meaningfully.

The org that asks the three triage questions 60% of the time is meaningfully better than the org that asks them 0% of the time. The CFO who models scrutiny in two cross-functional reviews a month is building something durable. The CFO who forces it into every review, and burns bridges with stakeholders along the way, never will.

Progress compounds. Start where you have the most influence. Build the muscle where it’s easiest to build first. Let early wins set the cultural expectation. Leverage what already works in your org rather than importing a framework wholesale.

AI ripped down the fence, and now you know what to build in its place. The rest is just doing the work, one deliberate decision at a time.

Before you go

If this resonated, you may enjoy my conversation on the 10x Finance Podcast with Albert Ghazi at Aleph, where I dug into some of these ideas live. We covered why alignment is overrated, the “no sevens allowed” rule, and the AI cost-to-consume argument that kicked off this whole series. If you want to hear it in conversation rather than in print, it’s worth a listen.

If you’re interested in a candid look at the playbook I’ve used to turn Dashlane’s finance function into a true engine of strategic partnership, I’m doing a webinar with FinQore on Tuesday, May 12th. Register here - hope to see you there!